The history of computers is a story of innovation, discovery, and technological progress that has transformed how we live, work, and communicate. From the earliest mechanical devices to today’s powerful supercomputers, that journey has been marked by key milestones and breakthroughs.

1. The Dawn of Computing: Early Machines

The story of computers dates back to the early 19th century, when Charles Babbage, an English mathematician and inventor, conceptualized the Analytical Engine. Often referred to as the “father of the computer,” Babbage designed a mechanical device that could perform complex calculations. Though it was never built, the Analytical Engine anticipated the modern computer with a memory (the “store”), a processing unit (the “mill,” comparable to a CPU), and punched-card input with a printer for output.

In 1843, Ada Lovelace, an English mathematician, published what is widely regarded as the first algorithm intended for implementation on the Analytical Engine, which is why she is often described as the first computer programmer.

2. The Mid-20th Century: From Mechanical to Electronic

The 20th century saw significant strides in computing technology. The first electronic computers were developed during and shortly after World War II. One of the most notable was ENIAC (Electronic Numerical Integrator and Computer), completed in 1945. It occupied a large room and weighed over 27 tons. ENIAC could perform about 5,000 additions per second, a monumental achievement at the time.

A few years later, in 1951, the UNIVAC I (Universal Automatic Computer I) was introduced as the first commercially produced computer in the United States. It was used for tasks such as census data processing and forecasting.

3. The Rise of Transistors and Integrated Circuits

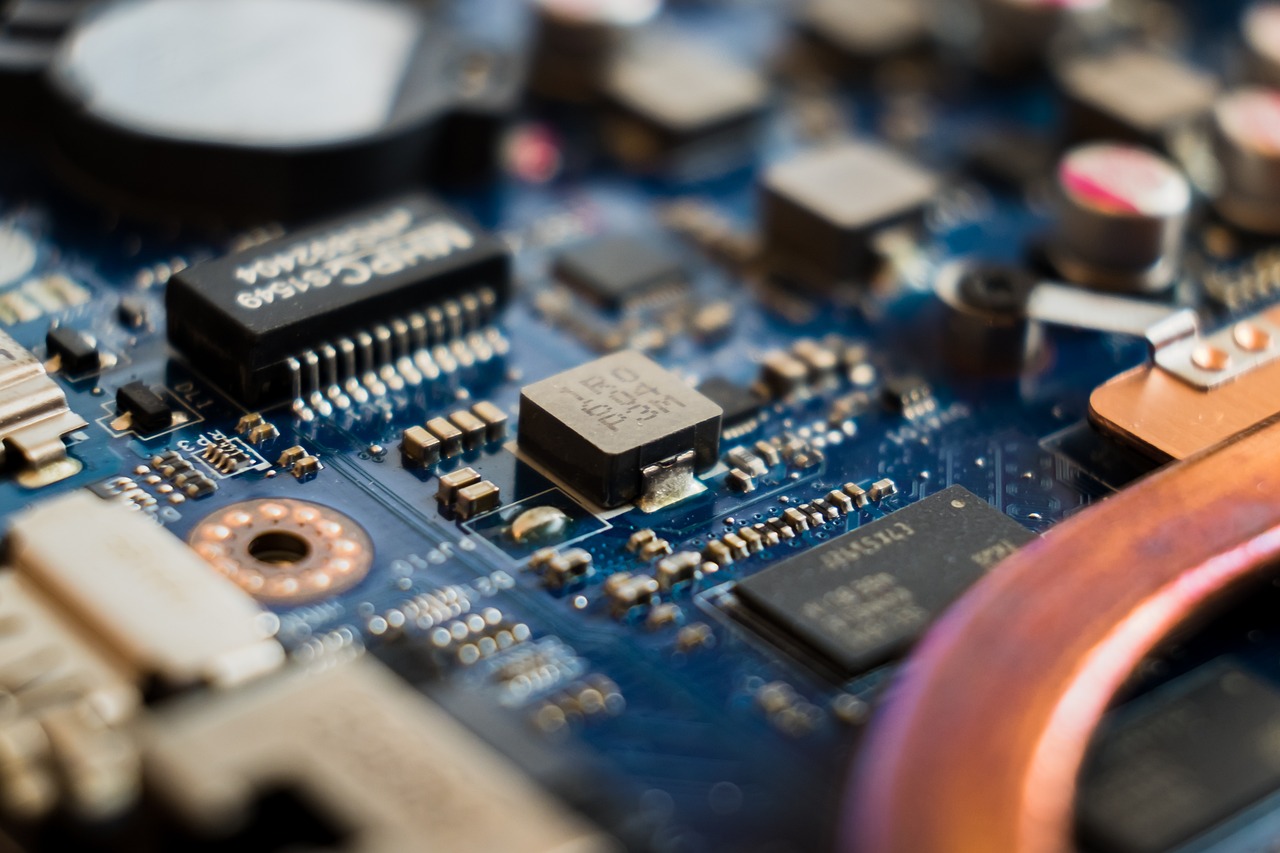

In the 1950s and 1960s, computers became smaller, faster, and more reliable thanks to the transistor, which had been invented in 1947. These tiny electronic switches replaced the bulky vacuum tubes used in earlier computers, making machines more compact and energy-efficient. This led to the development of the second generation of computers.

In the late 1950s, the introduction of integrated circuits (ICs) further accelerated computer development. Early ICs combined only a handful of transistors on a single chip, but later generations packed in thousands and eventually billions, significantly reducing size and cost while boosting performance.

4. The Personal Computer Revolution

The 1970s and 1980s marked a pivotal point in the evolution of computers with the emergence of personal computers (PCs). In 1975, the Altair 8800 became the first commercially successful microcomputer, sparking the personal computer revolution. Shortly thereafter, companies like Apple, IBM, and Microsoft led the charge in making computers more accessible to the general public.

The introduction of the Apple II in 1977 and the IBM PC in 1981 revolutionized how people interacted with computers. These machines were affordable, user-friendly, and compact enough to be used in homes and small businesses, opening up new possibilities for education, work, and entertainment.

5. The Internet Era: Connecting the World

The 1990s saw the Internet, which had grown out of research networks in earlier decades, reach the general public and radically change the way people used computers. The World Wide Web, proposed by Tim Berners-Lee in 1989 and opened to the public in 1991, along with browsers like Netscape Navigator and Internet Explorer, made information and communication more accessible than ever.

The rise of the Internet also led to the development of e-commerce, social media, and cloud computing, creating new industries and business models. Computer networks and Internet connectivity became integral parts of modern life.

6. Modern Day: Artificial Intelligence and Supercomputers

Today, computers are powerful tools not only for computation but also for processing vast amounts of data, driving innovations in artificial intelligence (AI), machine learning, and big data. Computers are now at the heart of industries ranging from healthcare and finance to entertainment and autonomous vehicles.

Supercomputers like Fugaku, which ranked first on the TOP500 list in 2020 and 2021, are capable of performing hundreds of quadrillions of calculations per second. These machines play crucial roles in scientific research, climate modeling, and simulating complex systems.

Moreover, modern computers are increasingly compact, with devices like smartphones, tablets, and wearables bringing powerful computing capabilities into our pockets. The shift toward cloud computing has also allowed individuals and businesses to access computing resources remotely, making it easier to collaborate and store vast amounts of data.

7. The Future of Computing: Quantum Computing and Beyond

Looking ahead, the future of computing seems even more exciting. Quantum computing, which harnesses the principles of quantum mechanics, promises to revolutionize industries by solving certain problems that are impractical for classical computers to tackle. Companies and research institutions worldwide are racing to build practical, large-scale quantum computers, with the potential to disrupt fields like cryptography, drug discovery, and materials science.

Additionally, advancements in neuromorphic computing and bio-computing could lead to machines that mimic human brains, opening up new frontiers in artificial intelligence and cognitive computing.

Conclusion: A Journey of Innovation

The evolution of computers has been marked by continuous innovation and progress. From the early mechanical devices to the powerful, interconnected systems we rely on today, computers have come a long way. As technology continues to advance, it’s clear that the future of computing will bring even more incredible changes, making the possibilities for human progress nearly limitless.
